What Is Anthropic Thinking?

An interview with Anthropic co-founder Jack Clark on the specter of AI-fueled mass unemployment, the future of agents, and how to raise children in an age of super-intelligence

The landscape of AI is not merely filled with news. It is filled with teams. You have the doomers, the accelerationists, the skeptics, the it’s-a-bubble oracles, the anti-bubble counter oracles, and so on. It would be convenient for my sanity—and, perhaps, the sanity of my readers—if I simply joined one team and never removed the jersey. But I don’t think any aforementioned tribe has a monopoly on good arguments. I think the doomers are right about the risk of the technology, and the accelerationists are right about the promise of the technology, and the skeptics are right that the doomers and accelerationists can both overstate their cases.

So I’m trying to make sure that my coverage of AI cuts across silos and allows readers to hear from all of the teams. Last week, I published an interview with the investor and writer Paul Kedrosky on the case for AI being an economic bubble. But if any single data point pierces that narrative, it’s this: Between December 2025 and March 2026, the AI lab Anthropic more than doubled its annual recurring revenue from $9 billion to more than $20 billion. According to several analysts, there is no record of any company growing this fast at this scale … ever. It’s hard to imagine that artificial intelligence is both a bubble and the home to the industry witnessing the fastest-growing businesses in history.

Today’s conversation is with Anthropic co-founder Jack Clark. I don’t need Jack or anybody at Anthropic to read me a corporate statement about the company’s revenue growth. I can do that myself. What I really wanted to hear from Jack were his answers to the deepest, thorniest philosophical questions about the meaning of AI. Questions like:

If Anthropic’s executives believe that AI might be as dangerous as nuclear weapons, what right does any private business have to build this sort of thing for profit?

If AI is really so good at making people more productive, why do Americans overall say they disapprove of AI more than just about every other institution and individual in the world?

Does Anthropic really believe that AI will lead to imminent mass unemployment?

Why—as Noah Smith recently put it—does this industry insist on “our product will make you economically useless, and possibly kill you!” as a marketing strategy?

Why does AI still seem quite inept at coming up with truly original insights?

How does Anthropic use its own autonomous agents to increase productivity within the company?

If other companies learn to use agents effectively, is knowledge work “cooked”?

How should we raise our children in an age of AI?

And what values would super-intelligence make even more important than they are today?

IF AI IS LIKE A NUKE, WHY SHOULD PRIVATE COMPANIES BUILD IT, AT ALL?

Derek Thompson: Anthropic has compared artificial intelligence to nuclear weapons on several occasions. Most recently, in January, Dario Amodei, the CEO of Anthropic, said that the Trump administration’s decision to allow advanced NVIDIA chips to be exported to China was “a bit like selling nuclear weapons to North Korea.”

The US does not allow private companies to build nuclear weapons. That is the law. If artificial intelligence is just like nuclear weapons, why should we allow private firms to build it for profit?

Jack Clark: AI is fundamentally like everything. It’s like a factory that produces cars, micro scooters, animals, and nuclear weapons all at the same time. And the main question we’re going to have to deal with as a society is how do you govern those factories and how do you decide what the appropriate uses are of the things that come out? I can’t talk about the specifics of our ongoing discussions with the Department of Defense. I can say that Anthropic was extremely committed to working on national security early because we recognize that AI is going to touch every single part of life, and every single part of life is going to have its own range of incredibly thorny, difficult issues. We’re going to need a much larger societal conversation about how we govern this technology in general, and we will need to reckon with the fact that the technology comes from the private sector and then flows into all of these other sectors. That’s going to be really challenging. It’s something we haven’t encountered before, because previously you didn’t have a technology that could become anything. You had specific technologies built by specific industries for specific purposes, and that was in many ways simpler.

Thompson: I want to hear a robust defense of why this is the private sector’s job. The nuclear analogy is invoked in so many different ways: for export controls, for arguments about government attention, for arguments about existential stakes, for arguments about the need for international cooperation. But one conclusion this analogy very clearly supports is that the private sector should not control this technology.

So why does the analogy apply almost everywhere except here, where private companies are developing frontier AI for profit while the government attempts to regulate it from the outside?

Clark: We worked for many years with the National Nuclear Security Administration to actually test out how well AI could understand aspects of nuclear technology. And we used that to develop evals and ways of ensuring that we don’t proliferate things into the world that have an understanding of nuclear weapons. That’s almost a very positive example of how you would have the private sector work with government, where some things absolutely should only be the domain of government, like nuclear weapons. The job of a company producing a technology that can take on many different aspects is to work out the areas where it’s inappropriate to deploy that technology, like nuclear weapons, and then work with government to take that capability surface off. I think that describes some of the path we’re going to have to pursue here. And it’s one that most of the industry is going down, including for biological weapons.

Thompson: So you’re saying the right way to think about this is that AI is a multifarious factory technology, where you are creating super-powered Excel charts, which is a technology that has no precedent for government regulation, but you’re also creating technology that can be used by the Pentagon or by individuals for militaristic or dangerous ends. And so the analogy with nuclear weapons is true insofar as it is contained to the parts of your technology that are like nuclear weapons, but you’re also doing a lot of other things that have no analogy in nuclear weapons, like making white-collar workers a little bit more productive at their desk jobs. Is that a fair summary?

Clark: There are almost two problems here. One is that you have this factory that can produce anything, so you make sure that what comes out correlates to what we’ve decided society can have available in the free market. Not nuclear weapons. Yes, things that accelerate knowledge workers. And then you have this second question of, given the multifaceted nature of what can be produced, how do you work with government or academia or other parties on the things which you can’t push out to the world in general, but which have value in the rest of the world? An example here is biology. For [technology] that can massively accelerate the development of biological science, you need to work out: what is the path to bringing that technology about? So some of the conversation that society is going to have now is, what are the appropriate ways we want this technology to be used, and how do people decide what to do with different things in this factory, and how to proliferate them, so society gets the benefit?

ANTHROPIC’S UNUSUAL MARKETING PITCH: OUR PRODUCT MIGHT DESTROY YOUR JOB—OR THE WORLD

Thompson: Anthropic CEO Dario Amodei has predicted on several occasions that AI will destroy half of all entry-level white-collar positions and spike unemployment to as high as 20 percent, which would be the highest unemployment rate since the Great Depression. He said this could happen in as little as five years. Do you agree with that forecast?

Clark: We’re talking about one of the potential things that can happen, and I think it’s worth noting that this is a choice. I don’t agree with this, because I think it’s a choice that we can make. Also, my personal view based on the data I look at is that big changes in employment take a long time to filter through to the economy. And even with the magnitude of what we’re talking about, you might expect it to take longer.

Let’s say there is the potential for massive employment changes. I think that this is accompanied by the fact that AI must also be growing the economy a lot and causing a lot of economic activity. If that is the case, then you would expect more freedom about [designing] policy and [deciding] what we do with this economy. If you end up in a situation where employment is negatively affected by AI in one part of the economy, but loads of money is being generated by AI in another part of the economy, you could choose to create jobs, like teaching or nursing, where we have a preference for more people working. You could both increase the number of jobs and do things like cross-sector wage subsidies to improve the wages of those jobs, where today we severely under-compensate them.

Thompson: It is unusual in corporate history for a company to announce that if its product is successful, tens of millions of people will lose their jobs and there’s a non-zero chance that we end the human race entirely. I think the analogy I’ve used before is that Henry Ford would have been within the realm of reality if he said, “If this Model T thing takes off, hundreds of thousands of Americans are going to die every decade from car accidents.” In fact, that’s happened. But Ford and GM did not talk like that in the 1910s and 1920s. What is the strategy of communicating your technology to the American people as a means by which we might have 20 percent unemployment and a non-zero chance of human catastrophe?

Clark: These are not the outcomes we want or anyone in the industry wants. But I think the industry has also learned from the overly rosy predictions made by many in the technology industry before, about how the only effect they’d have on the world would be unalloyed positivity. And I think the world lost huge amounts of trust in the technology industry because of that, because they saw that it wasn’t only positive. Social media has caused a range of amazing positives in the world and a range of harms, which we’re now dealing with. The ethos here, and why I’m working on a new initiative called the Anthropic Institute, is to share a lot more data about what we see in front of us so that society is better prepared for any of the different changes which could come along.

We also don’t spend enough time talking about all of the really positive changes, which I think are a choice that we can make as a civilization. But it would be negligent of us to not call out that there are ways we as a species could get this technology wrong. If you look at scientists and people that have worked on transformational technologies before in biology or in the early days of nanotechnology, they’ve all talked about this combination of upsides and risks. It’s just that AI as a sector has matured and made a lot more impact on the markets than either of those classes of technology over the same time period, so everything’s accentuated.

WHY DO AMERICANS HATE AI?

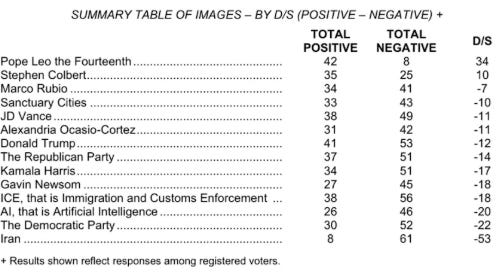

Thompson: I hear the argument that you are reacting to the social media experience. But I also look at polling. Last week, NBC News published a national survey on attitudes toward a range of politicians and institutions. AI’s net favorability was negative 20. That’s below every politician surveyed in the poll and it’s below ICE. Why do you think people say they hate artificial intelligence?